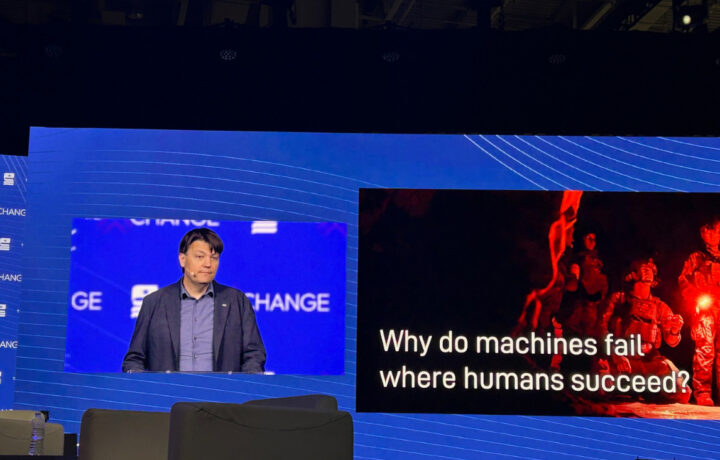

At the Special Competitive Studies Project AI+ Expo, one of the more thought-provoking conversations about the future of artificial intelligence didn’t focus on bigger models or faster compute. Instead, Defense Advanced Research Projects Agency (DARPA) Program Manager Eric Davis challenged the audience to rethink what makes AI truly useful in high-stakes environments.

His message was simple, but significant: the future of AI in national security is not just about intelligence. It’s about teamwork.

Today’s AI, Davis argued, behaves like “the perpetual junior colleague.” It can process enormous amounts of information, generate responses, and complete tasks with impressive speed. But when it comes to adapting to human intent, understanding team dynamics, or operating effectively in crisis situations, current systems still fall short.

That gap matters in national security environments where teams are regularly forced to make decisions in unfamiliar or rapidly changing situations.

Davis centered much of his talk on the military concept of “self-synchronization,” the ability of warfighters to make the right decisions even when disconnected from leadership or operating in unfamiliar situations. Teams succeed in those moments not because every scenario was pre-programmed, but because humans learn each other over time. They understand intent, priorities, emotional reactions, and how teammates operate under pressure.

“You can’t pretrain relationships,” Davis said in the discussion.

That point cuts directly against much of today’s AI conversation. Large language models can predict the next word in a sequence with incredible sophistication, but they do not actually know the individual human they are supporting. They operate on generalized models built from massive data sets, not personalized understanding.

For defense and intelligence applications, Davis argued that creates real operational challenges.

He warned that in crisis situations, humans already struggle with limited working memory and “attention tunneling,” where focus narrows so tightly that important information gets missed. Instead of reducing that burden, poorly integrated AI teammates can actually increase it by forcing humans to constantly monitor and compensate for machine shortcomings.

One of the more compelling parts of the talk was Davis’ focus on emotional intelligence as a measurable and essential capability for both humans and machines. He cited research showing that the most effective teams are not determined by raw intelligence or even the smartest individual in the room. Instead, team success is driven largely by emotional intelligence: the ability to understand others, anticipate reactions, regulate emotions, and adapt collaboratively under pressure.

That idea may sound unusual in a defense technology conversation, but Davis argued it is foundational to the next phase of AI development.

“We should be investing in emotions mathematically,” he said, pointing to emerging DARPA research into “affective reasoning” and emotional AI.

Rather than viewing emotions as irrational or secondary, Davis framed them as core decision-making systems that help humans prioritize goals, navigate uncertainty, and work effectively together. He noted that medicine, education, neuroscience, and military training have long recognized emotional regulation and interpersonal understanding as critical skills. AI development, however, has largely focused on cognitive capability alone.

That imbalance, he argued, limits what machines can ultimately become.

The broader warning from the DARPA program manager was that the industry may be over-investing in one path of AI advancement while ignoring others that could prove transformational. Scaling models larger and larger may improve language prediction, but Davis argued that intelligence is not just language. Human cognition also relies on memory, attention, emotional processing, inhibitory control, timing, and coordination.

“The brain is not a language model,” he said. “The brain has a language center.”

For cleared professionals, engineers, AI researchers, and defense contractors, the conversation offered a different lens on where AI development could be headed. Davis argued future AI systems will need to function more like teammates than simple tools. That means adapting to specific operators, mission sets, and leadership styles in ways current frontier models cannot.

He also pointed to earlier research showing that AI systems with even limited emotional teaming capabilities significantly outperformed more technically accurate systems that lacked those interpersonal traits. The reason, he argued, was that humans worked more effectively with them.

In discussions around human-machine teaming, that distinction matters.

The talk also reflected a broader theme emerging in defense AI conversations. While much of the commercial market remains focused on automation and scale, researchers inside DARPA are also examining trust, adaptability, and coordination between humans and machines.

The discussion suggested that simply scaling models up may not be enough to achieve the next leap in AI capability.

Instead, Davis encouraged the audience to explore what he repeatedly called “the roads not taken” in AI research. For DARPA, that includes investing in systems that better understand teamwork, communication, emotion, and the complex ways humans coordinate under pressure.